Sitemap

A list of all the posts and pages found on the site. For you robots out there, there is an XML version available for digesting as well.

Pages

Posts

Future Blog Post

Published:

This post will show up by default. To disable scheduling of future posts, edit _config.yml and set future: false.

Blog Post number 4

Published:

This is a sample blog post. Lorem ipsum I can’t remember the rest of lorem ipsum and don’t have an internet connection right now. Testing testing testing this blog post. Blog posts are cool.

Blog Post number 3

Published:

This is a sample blog post. Lorem ipsum I can’t remember the rest of lorem ipsum and don’t have an internet connection right now. Testing testing testing this blog post. Blog posts are cool.

Blog Post number 2

Published:

This is a sample blog post. Lorem ipsum I can’t remember the rest of lorem ipsum and don’t have an internet connection right now. Testing testing testing this blog post. Blog posts are cool.

Blog Post number 1

Published:

This is a sample blog post. Lorem ipsum I can’t remember the rest of lorem ipsum and don’t have an internet connection right now. Testing testing testing this blog post. Blog posts are cool.

Portfolio

Portfolio item number 1

Short description of portfolio item number 1

Portfolio item number 2

Short description of portfolio item number 2

Publications

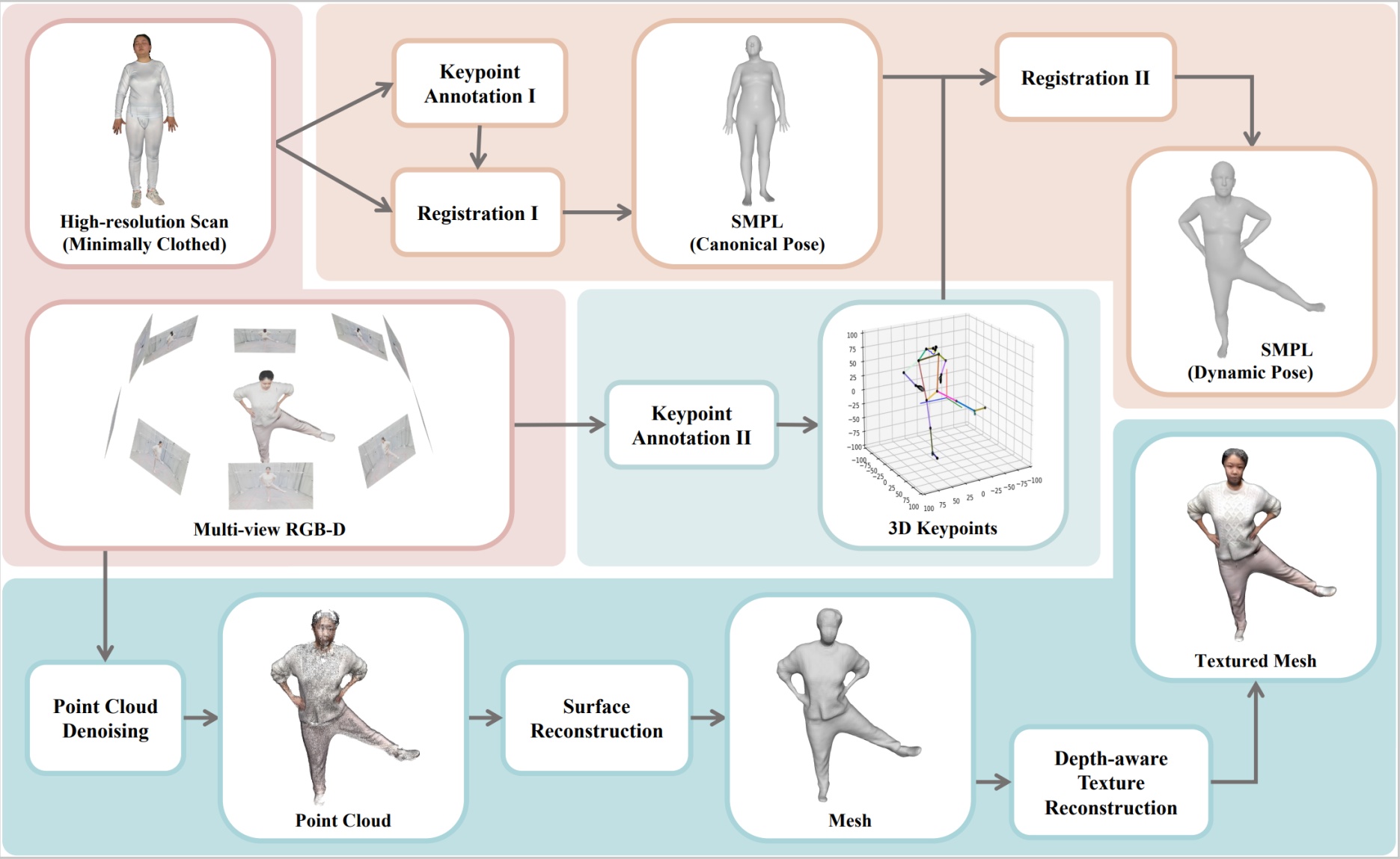

HuMMan: Multi-Modal 4D Human Dataset for Versatile Sensing and Modeling

BibTeX

@inproceedings{cai2022humman,

title={HuMMan: Multi-Modal 4D Human Dataset for Versatile Sensing and Modeling},

author={Cai, Zhongang and Ren, Daxuan and Zeng, Ailing and Lin, Zhengyu and Yu, Tao and Wang, Wenjia and Fan, Xiangyu and Gao, Yang and Yu, Yifan and Pan, Liang and Hong, Fangzhou and Zhang, Mingyuan and Loy, Chen Change and Yang, Lei and Liu, Ziwei},

booktitle={ECCV},

year={2022}

}

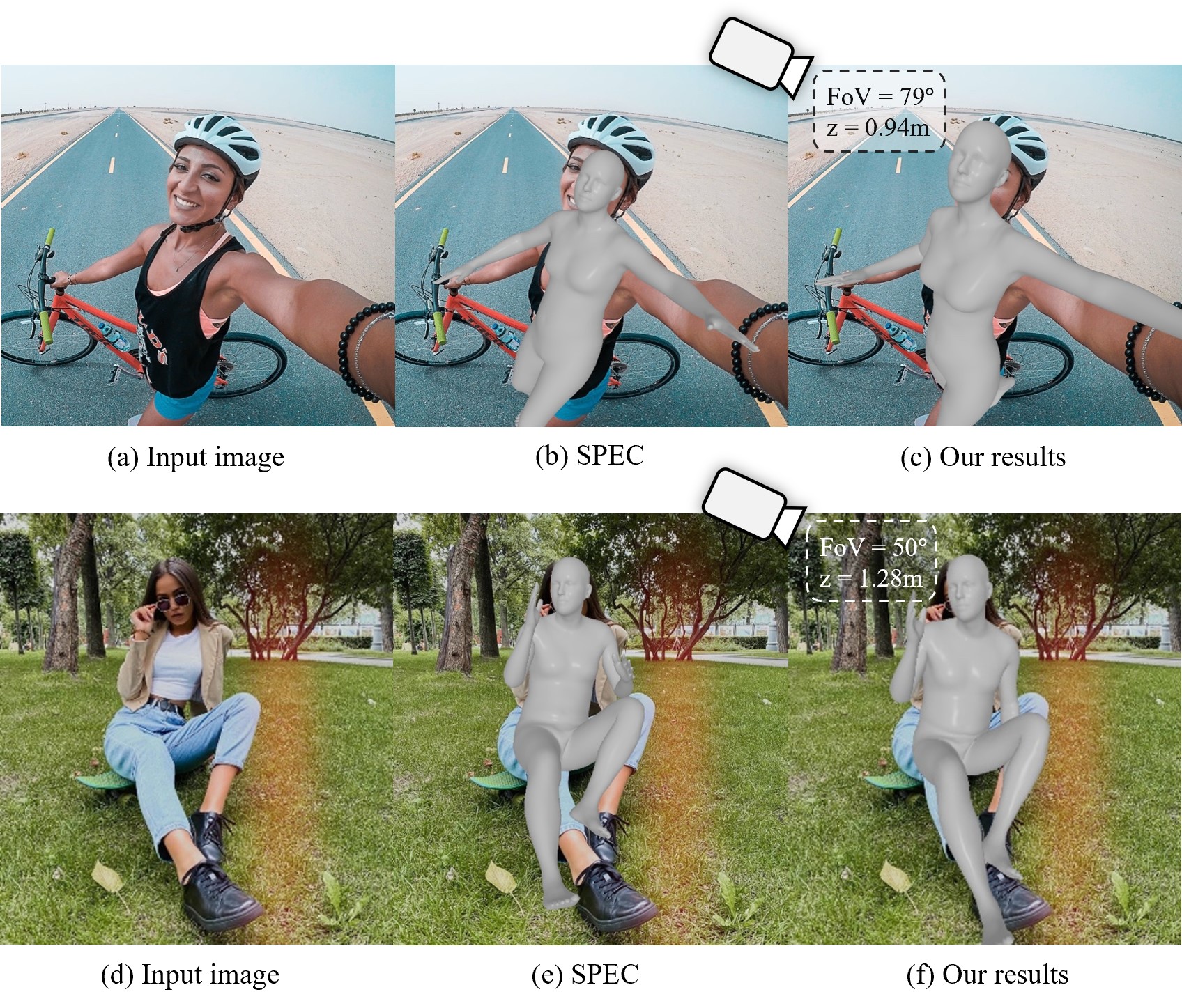

Zolly: Zoom Focal Length Correctly for Perspective-Distorted Human Mesh Reconstruction

BibTeX

@inproceedings{wang2023zolly,

title={Zolly: Zoom Focal Length Correctly for Perspective-Distorted Human Mesh Reconstruction},

author={Wang, Wenjia and Ge, Yongtao and Mei, Haiyi and Cai, Zhongang and Sun, Qingping and Wang, Yanjun and Shen, Chunhua and Yang, Lei and Komura, Taku},

booktitle={ICCV},

year={2023}

}

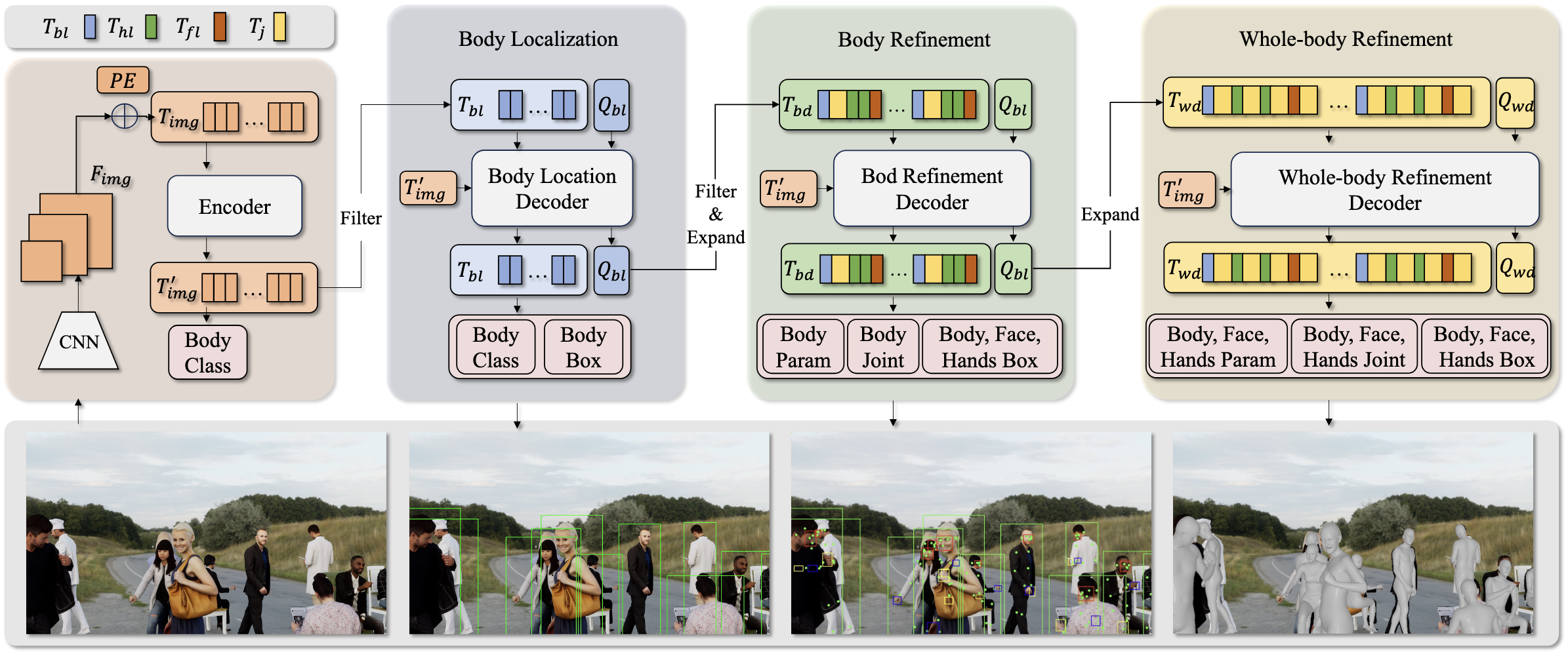

AiOS: All-in-One-Stage Expressive Human Pose and Shape Estimation

BibTeX

@inproceedings{sun2024aios,

title={AiOS: All-in-One-Stage Expressive Human Pose and Shape Estimation},

author={Sun, Qingping and Wang, Yanjun and Zeng, Ailing and Yin, Wanqi and Wei, Chen and Wang, Wenjia and Mei, Haiyi and Leung, Chi Sing and Liu, Ziwei and Yang, Lei and Cai, Zhongang},

booktitle={CVPR},

year={2024}

}

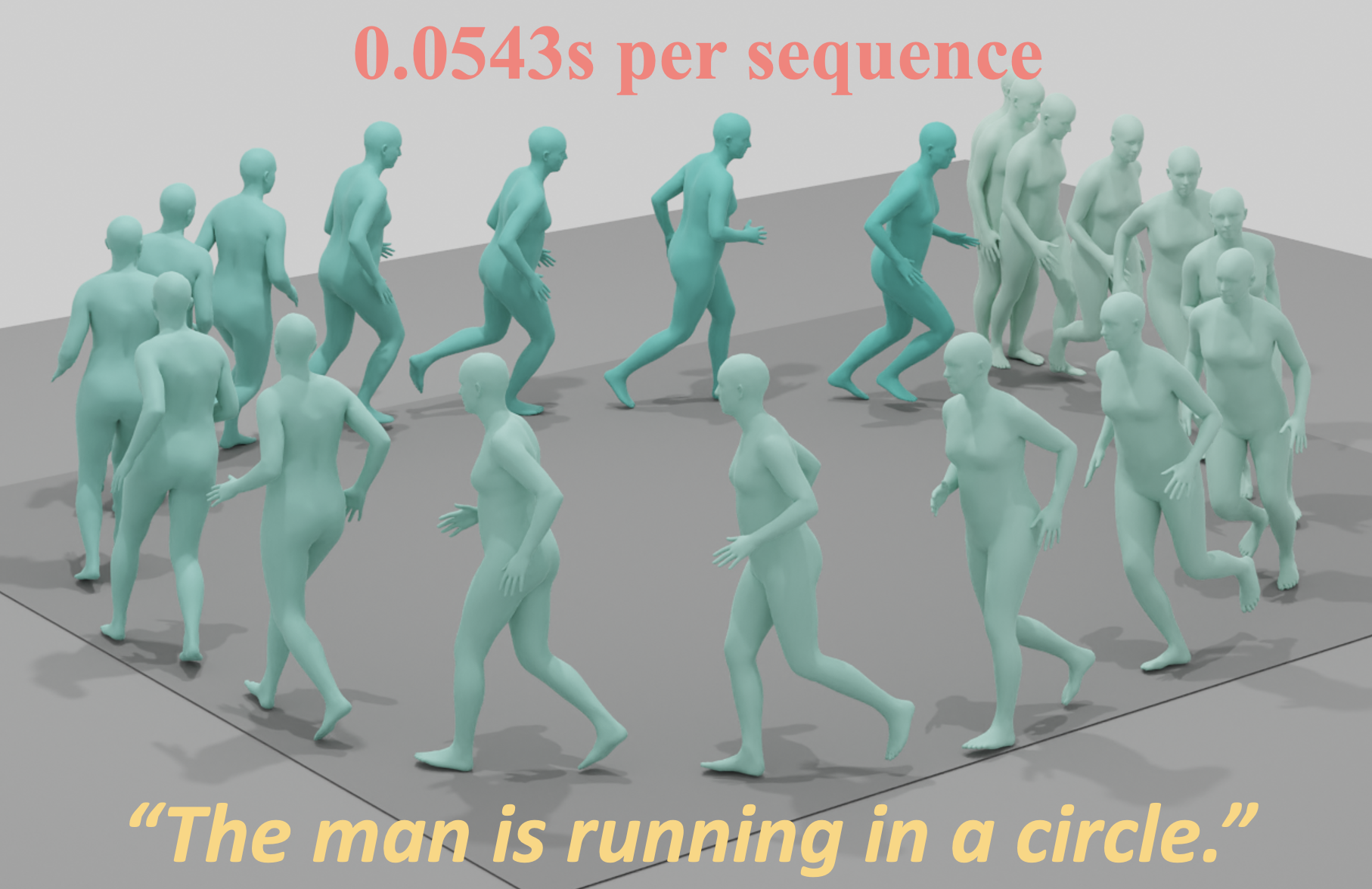

EMDM: Efficient Motion Diffusion Model for Fast, High-Quality Human Motion Generation

BibTeX

@inproceedings{zhou2024emdm,

title={EMDM: Efficient Motion Diffusion Model for Fast, High-Quality Human Motion Generation},

author={Zhou, Wenyang and Dou, Zhiyang and Cao, Zeyu and Liao, Zhouyingcheng and Wang, Jingbo and Wang, Wenjia and Liu, Yuan and Komura, Taku and Wang, Wenping and Liu, Lingjie},

booktitle={ECCV},

year={2024}

}

RMD: A Simple Baseline for More General Human Motion Generation via Training-free Retrieval-Augmented Motion Diffuse

BibTeX

@article{liao2024rmd,

title={RMD: A Simple Baseline for More General Human Motion Generation via Training-free Retrieval-Augmented Motion Diffuse},

author={Liao, Zhouyingcheng and Zhang, Mingyuan and Wang, Wenjia and Yang, Lei and Komura, Taku},

journal={arXiv preprint arXiv:2412.04343},

year={2024}

}

SOFT: Synthetic High-Quality Dataset for Fish Tracking with Multiple Camera Views

BibTeX

@inproceedings{wu2025soft,

title={SOFT: Synthetic High-Quality Dataset for Fish Tracking with Multiple Camera Views},

author={Wu, Yifan and Wang, Wenjia and Dou, Zhiyang and Lou, Yuke and Xu, Rui and Konrad, Janusz and Wang, Wenping and Komura, Taku},

booktitle={arXiv},

year={2025}

}

Zero-Shot Human-Object Interaction Synthesis with Multimodal Priors

BibTeX

@inproceedings{lou2025zerohoi,

title={Zero-Shot Human-Object Interaction Synthesis with Multimodal Priors},

author={Lou, Yuke and Wang, Yiming and Wu, Zhen and Zhao, Rui and Wang, Wenjia and Shi, Mingyi and Komura, Taku},

booktitle={arXiv},

year={2025}

}

TokenHSI: Unified Synthesis of Physical Human-Scene Interactions through Task Tokenization

BibTeX

@inproceedings{pan2025tokenhsi,

title={TokenHSI: Unified Synthesis of Physical Human-Scene Interactions through Task Tokenization},

author={Pan, Liang and Yang, Zeshi and Dou, Zhiyang and Wang, Wenjia and Huang, Buzhen and Dai, Bo and Komura, Taku and Wang, Jingbo},

booktitle={CVPR},

year={2025}

}

SIMS: Simulating Stylized Human-Scene Interactions with Retrieval-Augmented Script Generation

BibTeX

@inproceedings{wang2025sims,

title={SIMS: Simulating Stylized Human-Scene Interactions with Retrieval-Augmented Script Generation},

author={Wang, Wenjia and Pan, Liang and Dou, Zhiyang and Mei, Jidong and Liao, Zhouyingcheng and Lou, Yuke and Wu, Yifan and Yang, Lei and Wang, Jingbo and Komura, Taku},

booktitle={ICCV},

year={2025}

}

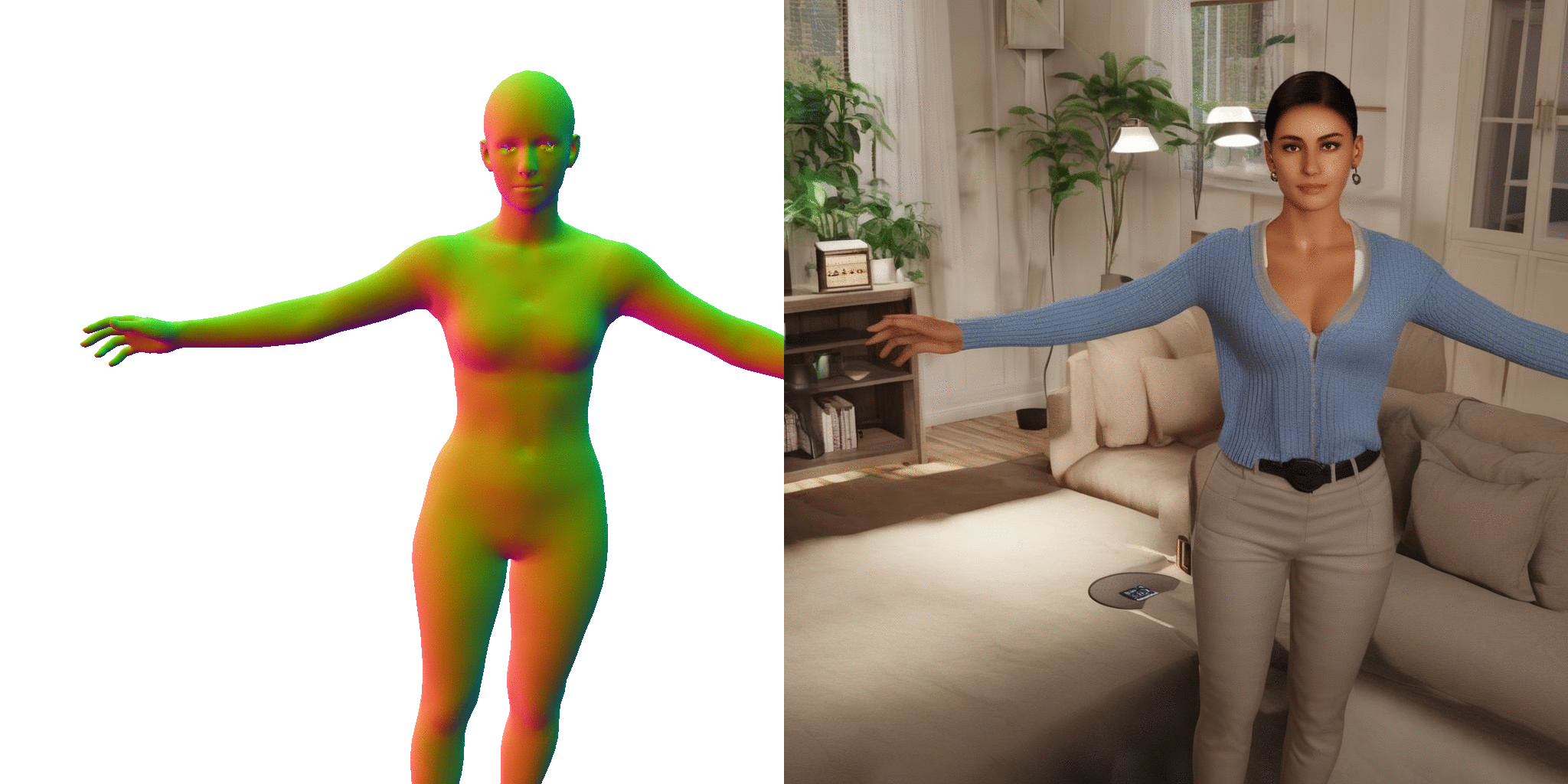

3D Human Reconstruction in the Wild with Synthetic Data Using Generative Models

BibTeX

@article{ge2024humanwild,

title={3D Human Reconstruction in the Wild with Synthetic Data Using Generative Models},

author={Ge, Yongtao and Wang, Wenjia and Chen, Yongfan and Wang, Fanzhou and Yang, Lei and Chen, Hao and Shen, Chunhua},

journal={TPAMI},

year={2025}

}

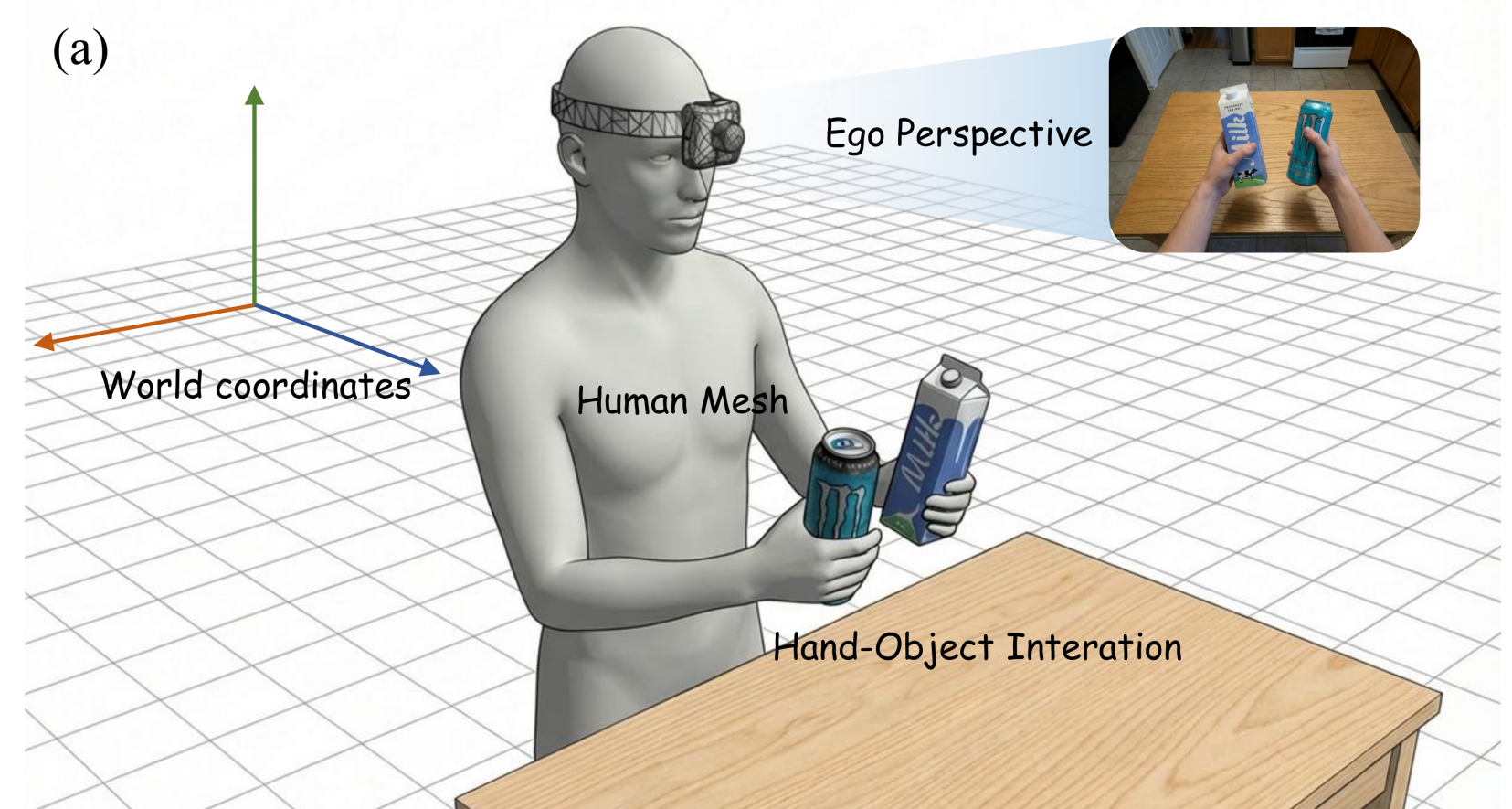

EgoGrasp: World-Space Hand-Object Interaction Estimation from Egocentric Videos

BibTeX

@inproceedings{fu2026egograsp,

title={EgoGrasp: World-Space Hand-Object Interaction Estimation from Egocentric Videos},

author={Fu, Hongming and Wang, Wenjia and Qiao, Xiaozhen and Yang, Shuo and Liu, Zheng and Zhao, Bo},

booktitle={arXiv},

year={2026}

}

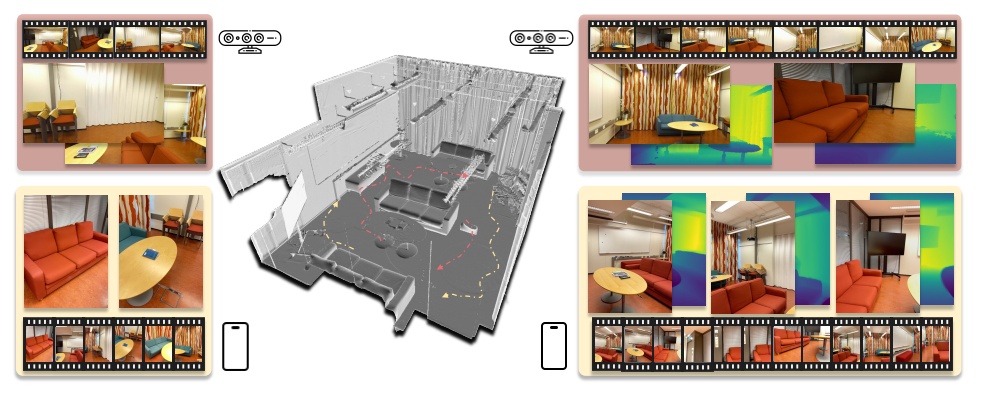

EmbodMocap: In-the-Wild 4D Human-Scene Reconstruction for Embodied Agents

BibTeX

@inproceedings{wang2026embodmocap,

title={EmbodMocap: In-the-Wild 4D Human-Scene Reconstruction for Embodied Agents},

author={Wang, Wenjia and Pan, Liang and Pi, Huaijin and Lou, Yuke and Ren, Xuqian and Wu, Yifan and Liao, Zhouyingcheng and Yang, Lei and Dabral, Rishabh and Theobalt, Christian and Komura, Taku},

booktitle={CVPR},

year={2026}

}

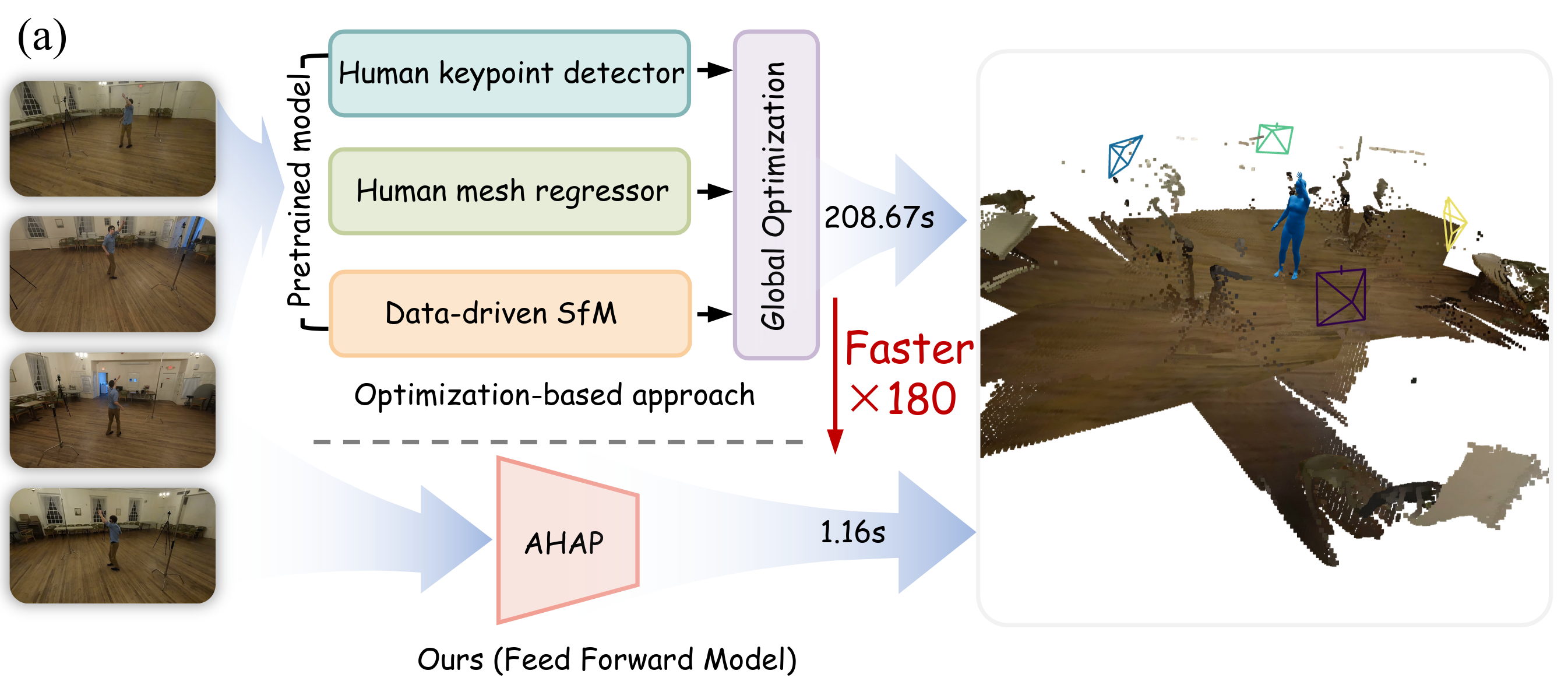

AHAP: Reconstructing Arbitrary Humans from Arbitrary Perspectives with Geometric Priors

BibTeX

@misc{qiao2026ahap,

title={AHAP: Reconstructing Arbitrary Humans from Arbitrary Perspectives with Geometric Priors},

author={Qiao, Xiaozhen and Wang, Wenjia and Zhao, Zhiyuan and Sun, Jiacheng and Luo, Ping and Zhang, Hongyuan and Li, Xuelong},

year={2026},

eprint={2602.23951},

archivePrefix={arXiv},

primaryClass={cs.CV},

url={https://arxiv.org/abs/2602.23951}

}

Talks

Talk 1 on Relevant Topic in Your Field

Published:

This is a description of your talk, which is a markdown file that can be all markdown-ified like any other post. Yay markdown!

Conference Proceeding talk 3 on Relevant Topic in Your Field

Published:

This is a description of your conference proceedings talk; note the different value in the type field. You can put anything in this field.

Teaching

Teaching experience 1

Undergraduate course, University 1, Department, 2014

This is a description of a teaching experience. You can use markdown like any other post.

Teaching experience 2

Workshop, University 1, Department, 2015

This is a description of a teaching experience. You can use markdown like any other post.